AI / Research Tool · Honda Research Institute × CMU · 2024

Aether

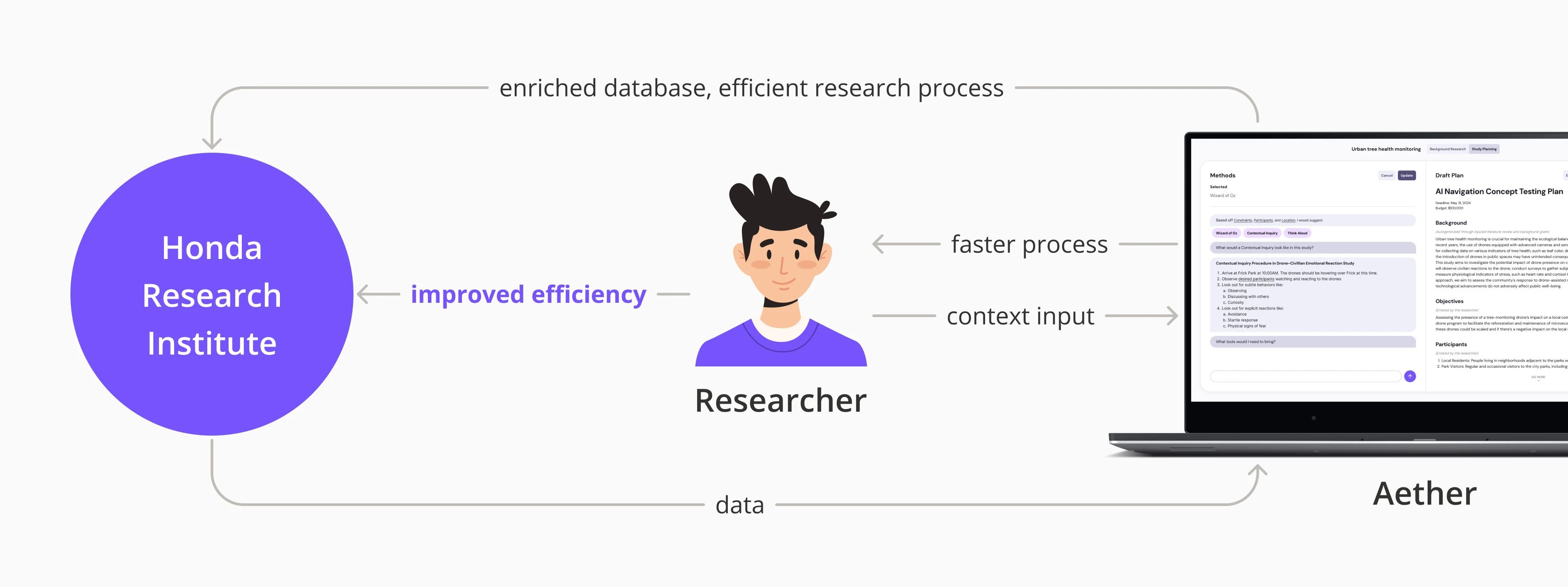

An LLM-powered research assistant for Honda's R&D scientists — built to compress months of literature review and field test planning into something a researcher could actually use between experiments.

Timeline

8 Months

Role

Interaction Design Lead

Team

5 People

Type

CMU MHCI Capstone

Brief

Honda Research Institute (HRI) partnered with CMU's MHCI program through 99P Labs to explore “the future of Human-AI Teaming research.” Deliberately broad. Five of us (three designers, one researcher, one engineer) had eight months to figure out what that meant and ship something real. I led interaction design.

Problem

HRI researchers are brilliant scientists stuck in a manual workflow that hasn't changed in years.

A single field test proposal could take up to six months. Not because the science was complex — the planning process was. Literature review was unstructured, findings didn't carry over between projects, and every researcher had built their own private system of spreadsheets and folders.

The Reframe

The brief said “Human-AI Teaming.” The real problem was field testing.

We started where the brief told us to — broad exploration of how AI and humans collaborate. We interviewed CMU faculty, PhD students, and HRI researchers. Everyone had opinions about AI teaming in the abstract. But the sharpest pain was always in the same place: the months-long slog of planning and executing field tests.

That was our reframe. We stopped trying to solve “Human-AI Teaming” as a concept and started designing for the researchers doing the actual work — people who needed to go from a pile of papers to a field-ready test plan, and were drowning in the middle.

Research

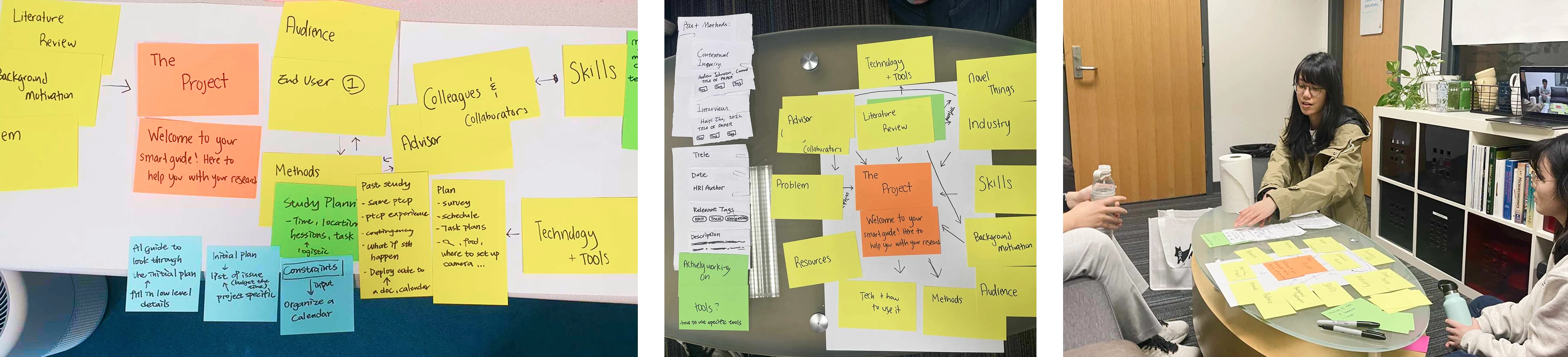

Expert interviews. Co-design sessions. A lot of humility.

We ran expert interviews with CMU faculty, PhD students, and HRI researchers to map the field testing workflow. Then co-design sessions to validate concepts directly with the people who'd use them. Designing for domain experts is humbling — they know more about their work than you ever will. The job isn't to tell them what to do. It's to remove the friction they've stopped noticing.

Researchers spent weeks on literature review before they could even frame a hypothesis — and most of that time was spent organizing, not reading.

Field test proposals could take up to six months. Not because the science was hard, but because the planning process was fragmented and manual.

Every researcher had their own system — Post-its, spreadsheets, folder hierarchies. Nothing was shared, and nothing carried over between projects.

Solution

An AI assistant that speaks researcher, not chatbot.

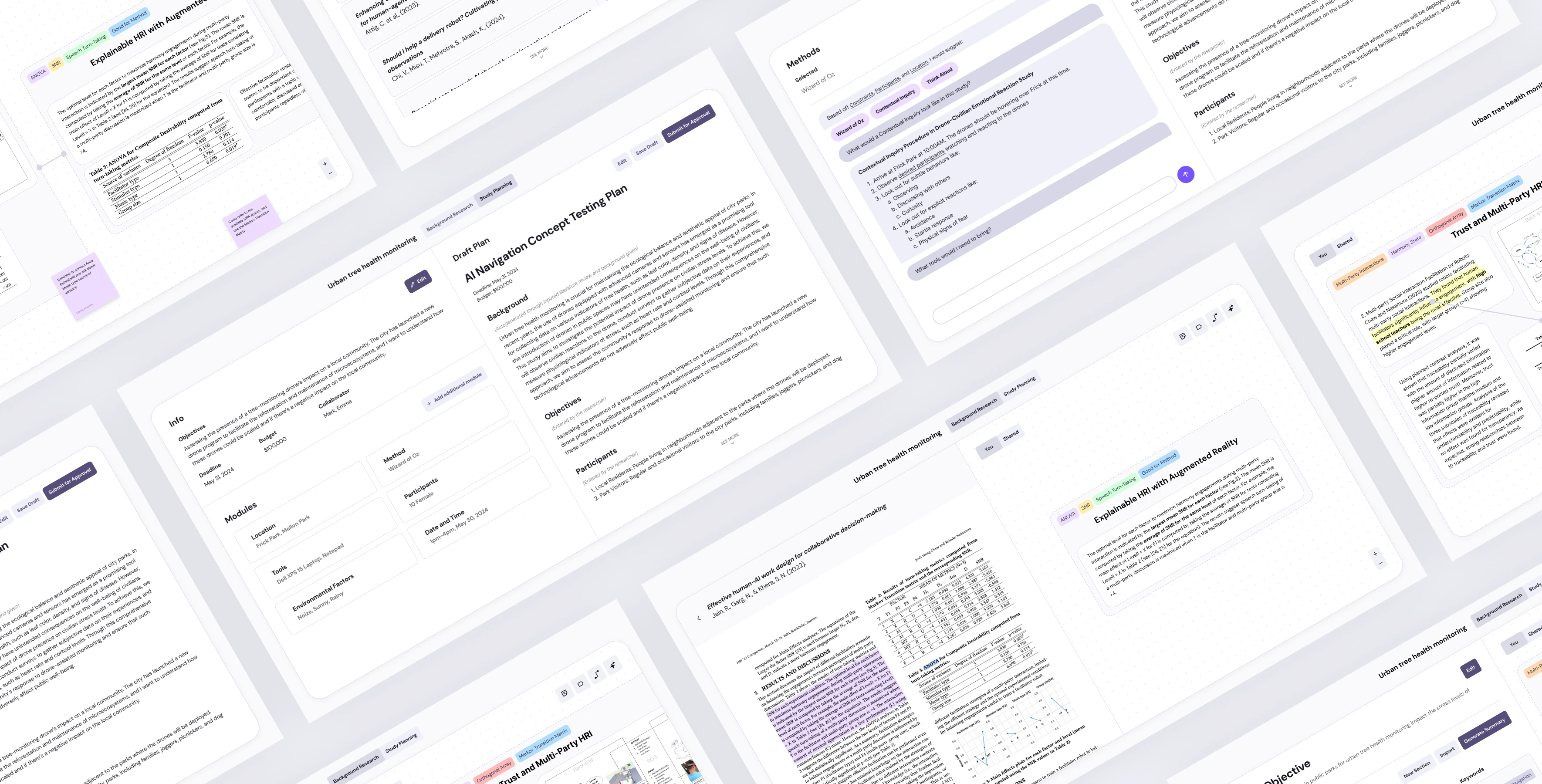

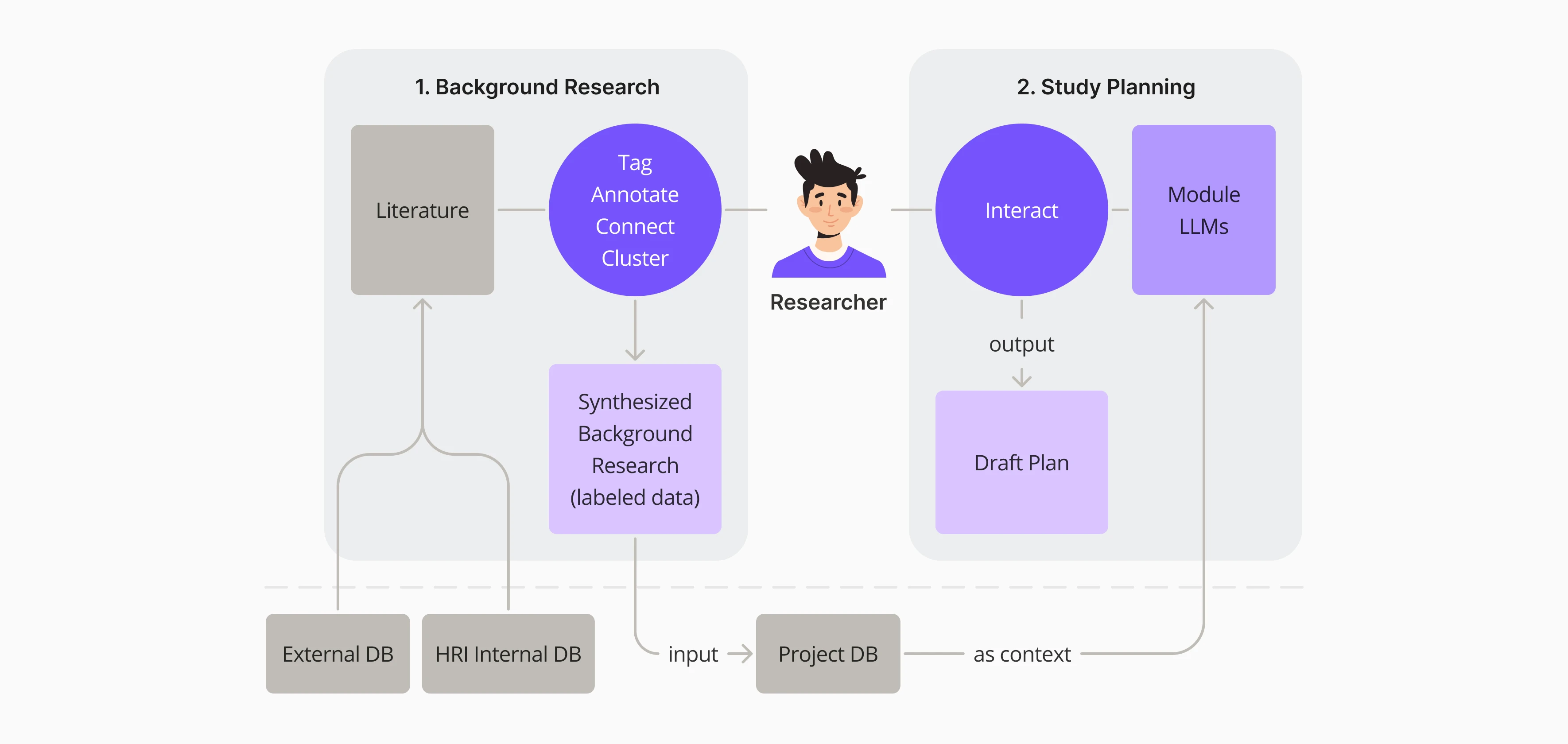

Aether is an LLM-powered research assistant fine-tuned on HRI's own body of work. It doesn't replace researchers — it handles the parts of the process that slow them down. Two modules, one workflow: go from a stack of papers to a field-ready test plan without losing weeks in between.

Module 01

Background Research

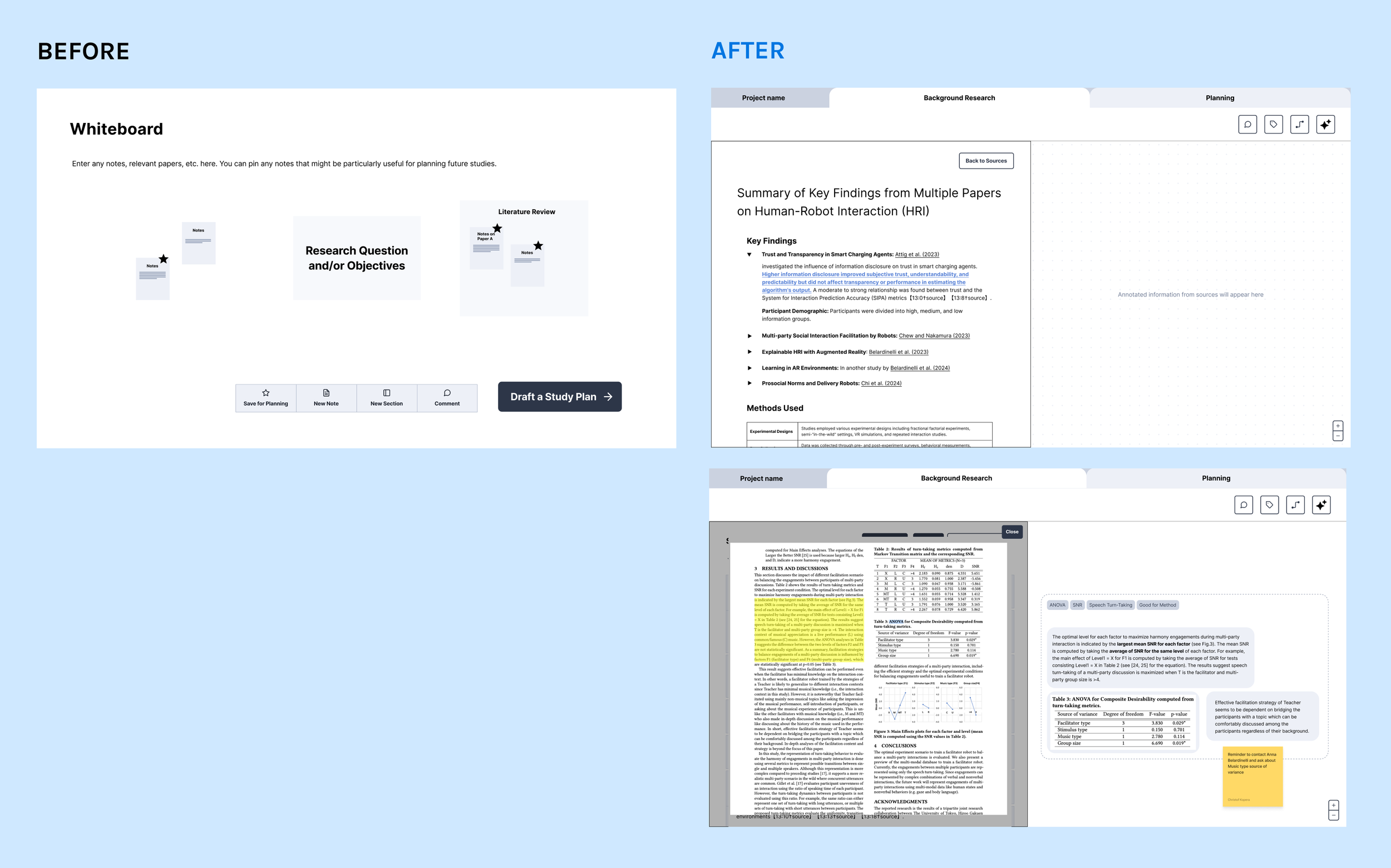

Import papers into Aether and it generates summaries, highlighting the most relevant information based on your research questions. Click any key finding to jump to the exact section of the original paper. Tag and annotate excerpts, then drag them onto a shared whiteboard to visually cluster and organize what you've learned.

Module 02

Study Planning

Feed in your variables and the research you've gathered. Aether generates a first draft of your field test plan, formatted to HRI conventions, covering location, equipment, procedures, and safety protocols. An expert assistant helps refine each section, and the system flags when changes in one module might affect the other.

Iterations

Three rounds, each one sharper.

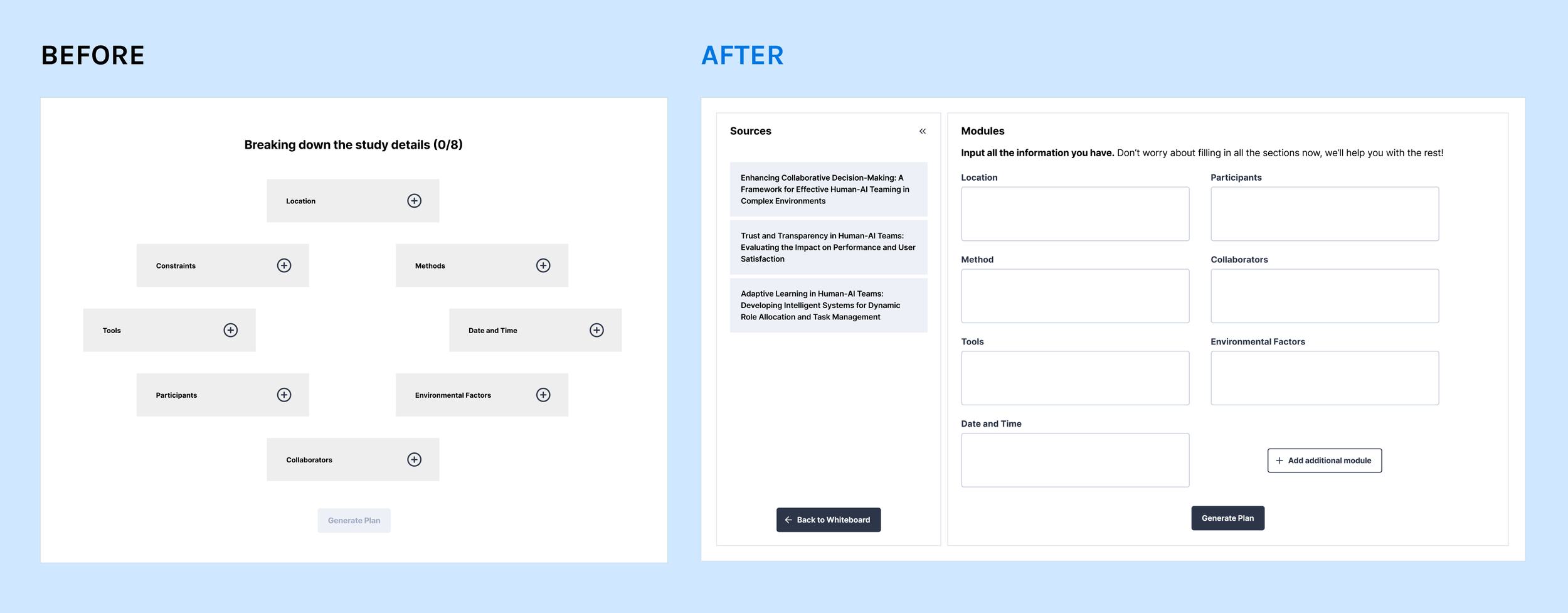

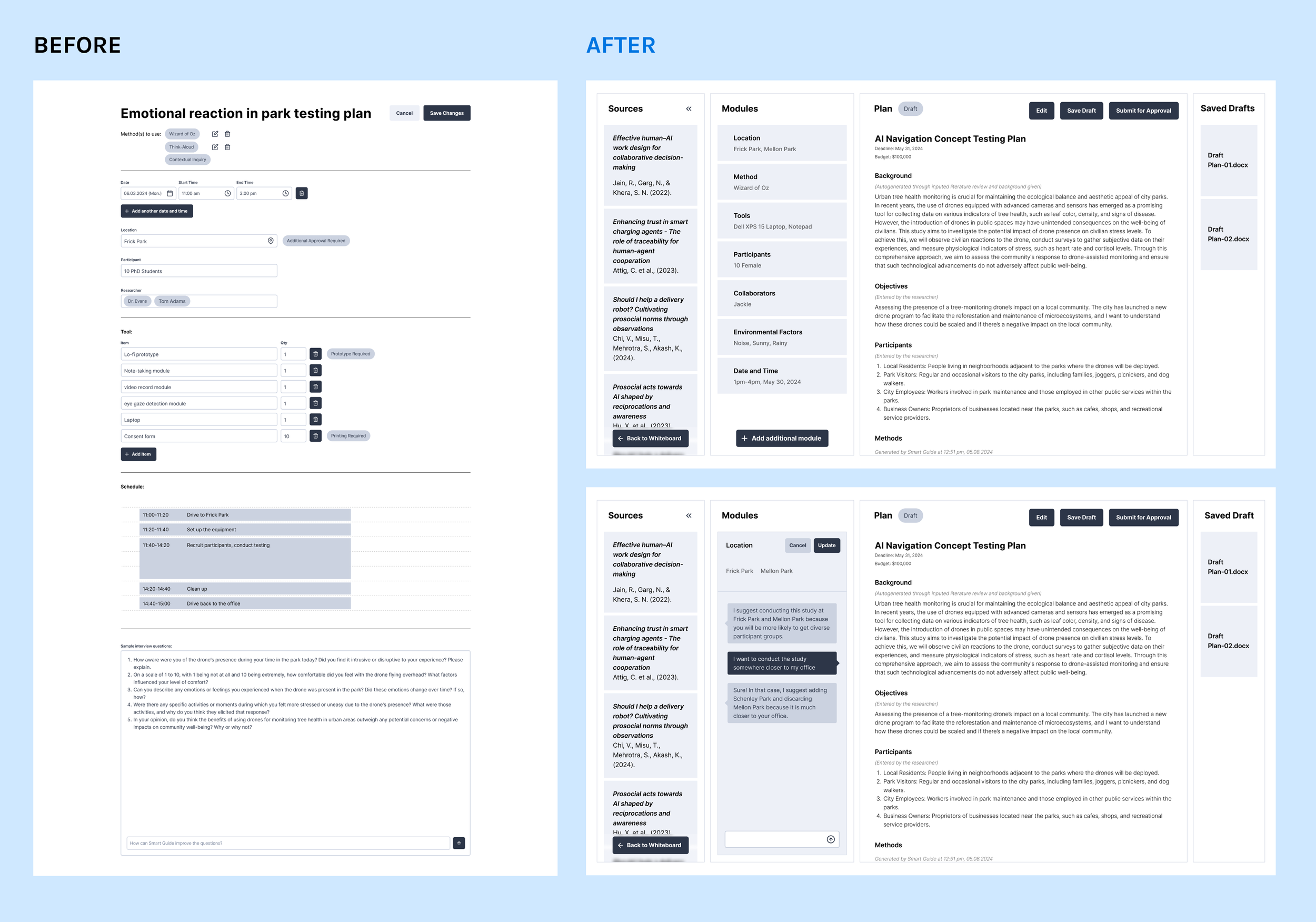

We iterated through three fidelity levels, from rough wireframes to interactive prototypes, testing with HRI researchers at each stage. Each round tightened the interaction model and stripped away what wasn't earning its place.

Early wireframes — mapping the research workflow

Mid-fidelity — background research module taking shape

High-fidelity — study planning with AI-generated drafts

Key Moments

What made this project different.

Designing for people who know more than you.

HRI researchers are domain experts with decades of experience. They don't need you to explain their workflow — they need you to see the friction they've normalized. The interviews that mattered weren't the ones where we asked good questions. They were the ones where we shut up and watched.

AI as material, not magic.

We were designing around an LLM that was still being fine-tuned. That meant constant negotiation between what we wanted the product to do and what the model could actually deliver. We scoped features around real capabilities, not demo-day fantasies. If the model couldn't reliably do something, we didn't ship it — we found a different interaction pattern.

The brief was a starting line, not a destination.

“The future of Human-AI Teaming” could have gone a hundred directions. It took three months of research to narrow it down to the thing that actually mattered: field test planning. If we'd committed to the first interesting idea, we'd have built the wrong product. The reframe was the most important design decision we made.

Outcome

HRI took it and kept building.

Prototype testing with HRI researchers validated the core concept — Aether's background research tool and AI-generated study plans were both recognized as genuine workflow improvements, not just capstone polish. Researchers praised the ability to go from imported literature to a structured, annotated knowledge base without switching tools.

After the capstone, Honda Research Institute's Ohio lab took ownership of Aether and continued development internally. For a student project, that's the best outcome you can ask for — someone wants to keep building the thing you made.

Reflection

The hardest part of designing with AI isn't the AI. It's the expectations.

Everyone walked in with a mental model of what an “AI assistant” should do — shaped by ChatGPT demos and sci-fi. Our researchers were no different. The real design work was managing the gap between what people imagined and what a fine-tuned LLM could reliably deliver. That meant saying no to features that would demo well but fail in practice, and finding interaction patterns that made the AI feel useful without overpromising.

The other thing I'd carry forward: when you're designing for experts, your job is to earn trust before you suggest change. We spent three months just understanding before we proposed anything. That patience is what made the final product something researchers actually wanted to use — not just something that looked good at a capstone showcase.